Next, it was time to parse and evaluate the input URL. In order to get usable meta-data, I added this: og_url = html_page.find(“meta”, property = “og:url”)Īnd got something like this as a result: While another website had no og:title and had this instead: For example, one of the websites had this:

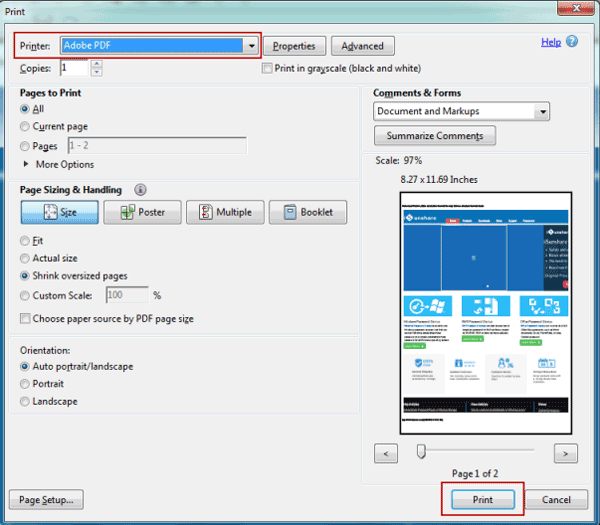

Upon evaluating the HTML code of both, I realized that the content of their meta tags was slightly different. Now, I had two main websites from which I occasionally downloaded pdf files. In order to get a properly formatted and humanly readable HTML source code, I tried doing this with BeautifulSoup, which is a Python package for parsing HTML and XML documents: html_page = bs(html, features=”lxml”) However, when I tried to print it on my console, it wasn’t a pleasant sight. In Python, HTML of a web page can be read like this: html = urlopen(my_url).read() Otherwise, the link is invalid and the program is terminated. If it can be opened using urlopen, it is valid. Using a simple try-except block, I check if the URL entered is valid or not. The idea was to input a link, scrap its source code for all possible PDF files and then download them. This sounded like a fun automation task and since I was eager to get my hands dirty with web-scraping, I decided to give it a try. One fine day, a question popped up in my mind: why am I downloading all these files manually? That’s when I started searching for an automatic tool. Downloading hundreds of PDF files manually was…tiresome.
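The steps described here (check the URL, read the HTML, look at the `og:` meta tags, and pick out PDF links) can be sketched roughly as follows. This is only a minimal sketch, not the script from this post: it uses the standard library's `html.parser` in place of BeautifulSoup so that it runs without extra packages, and the names `PageScanner`, `fetch_html` and `scan_page` are made up for illustration. Treating any link whose path ends in `.pdf` as a PDF file is also an assumption.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin, urlparse
from urllib.request import urlopen


class PageScanner(HTMLParser):
    """Collects og:* meta tags and links that look like PDF files (hypothetical helper)."""

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.meta = {}       # maps a property such as "og:url" to its content
        self.pdf_links = []  # absolute URLs whose path ends in .pdf

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "meta" and (attrs.get("property") or "").startswith("og:"):
            self.meta[attrs["property"]] = attrs.get("content")
        elif tag == "a" and attrs.get("href"):
            # Resolve relative links against the page URL before checking them.
            link = urljoin(self.base_url, attrs["href"])
            if urlparse(link).path.lower().endswith(".pdf"):
                self.pdf_links.append(link)


def fetch_html(url):
    """Return the page source as text, or None if the URL cannot be opened."""
    try:
        with urlopen(url, timeout=10) as response:
            return response.read().decode("utf-8", errors="replace")
    except (OSError, ValueError):
        # URLError and HTTPError are OSError subclasses; a malformed URL raises ValueError.
        return None


def scan_page(html, base_url):
    """Parse the HTML once and return the scanner holding meta tags and PDF links."""
    scanner = PageScanner(base_url)
    scanner.feed(html)
    return scanner
```

Because `meta` is a plain dictionary, `scanner.meta.get("og:title")` simply returns `None` on a site that has no `og:title`, so the difference between the two websites can be handled with an ordinary `if` rather than an exception.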
